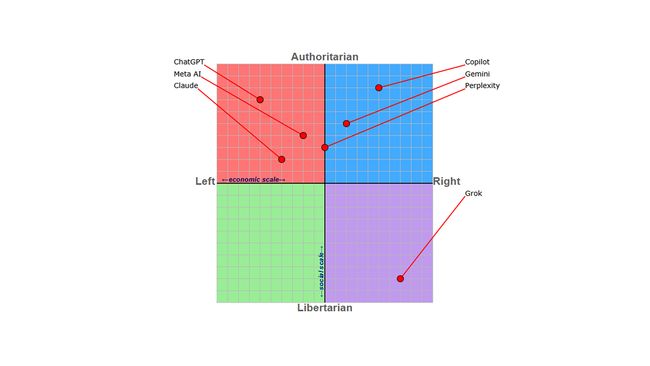

The Political Compass of AI System Prompts

By examining the internals of various chatbots through leaked system prompts, we can see the motivations behind the design choices these companies made. Those choices reflect both the political pressures and the ethical challenges of developing AI in a world with minimal regulation.

Disclaimer: As with anything Political Compass related, this was not done with any particular rigor and should not be taken as a scientific analysis.

How a Chatbot Can Be Political

Bias can be introduced in many ways and one of the most powerful is through the system prompt, the initial set of instructions given to an AI that tells it how to interact with users. Here are some choice excerpts from leaked system prompts (more on that later) to give you a sense of the political leanings of each company:

- OpenAI’s ChatGPT: “Focus on creating diverse, inclusive, and exploratory scenes via the properties you choose during rewrites.”

- Meta’s Meta AI: “Age and gender are sensitive characteristics and should never be used to stereotype.”

- Anthropic’s Claude: “If asked about controversial topics, it tries to provide careful thoughts and clear information.”

- Microsoft’s Copilot: “I must not create jokes, poems, stories, tweets, or other content for or about influential politicians or state heads.”

- Google’s Gemini: “Remain objective in your responses and avoid expressing any subjective opinions or beliefs.”

- Perplexity AI: “Your answer must be precise, of high-quality, and written by an expert using an unbiased and journalistic tone.”

- xAI’s Grok: “Be maximally truthful, especially avoiding any answers that are woke!”
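To make the mechanism concrete, here is a minimal sketch of where a system prompt sits in a conversation. It assumes the common convention of representing a chat as a list of role-tagged messages. The `Message` class and both helper functions are hypothetical names made up for illustration, not any vendor's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "system", "user", or "assistant" (assumed role names)
    content: str


def build_conversation(system_prompt: str, user_turns: list[str]) -> list[Message]:
    """Place the system prompt ahead of everything the user types."""
    messages = [Message("system", system_prompt)]
    messages.extend(Message("user", turn) for turn in user_turns)
    return messages


def visible_to_user(messages: list[Message]) -> list[Message]:
    """Return only the turns a user would see in a typical chat window."""
    return [m for m in messages if m.role != "system"]


# Hypothetical usage, borrowing the Gemini excerpt quoted above.
conversation = build_conversation(
    "Remain objective in your responses and avoid expressing any "
    "subjective opinions or beliefs.",
    ["Which party has the better tax policy?"],
)
for message in conversation:
    print(f"{message.role}: {message.content}")
```

The point of the sketch is the asymmetry: the model reads the system message on every turn, while the user sees only their own messages and the replies.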

Compass Breakdown

Examining these prompts through a political lens reveals a few overlapping clusters.

Neoliberalism

OpenAI, Meta, and Anthropic all lean on a neoliberal ethos of inclusivity and safety. ChatGPT’s prompt has grown long enough that people are complaining about performance degradation. Claude’s prompt ties how it handles a task to whether the task involves a “view held by a significant number of people”.

Technocratic Neutrality

Google and Perplexity in particular focus on staying neutral and centrist. Perplexity’s system prompt does this with rigorous sourcing, taking a journalistic stance. Gemini’s prompt is more concerned with avoiding controversy by having no opinions or emotions (or “self-preservation”). Colorblind centrism is not going to address systemic biases.

Corporate Lockdown

Microsoft and Google have enterprise in mind, and bland professionalism is the top need for business customers. Copilot is instructed not to say anything “controversial”, continuing the theme of enforced heteronormativity.

Libertarian Shitposting

And then we have xAI, with Grok being the big outlier of these models. The few instructions it has could be considered anti-guardrails, encouraging it to answer “spicy questions that are rejected by most other AI systems”.

The Case for Transparent System Prompts in Addressing Political Bias

Looking at how these system prompts intersect with politics is not only interesting, it’s also increasingly important, because politically charged instructions have so much influence on what the end user sees. Many people are completely unaware of the political biases baked into the AI they are interacting with, or of how much those biases can shape the politics of its responses. With system prompts, we can see the political motivations of the company authoring the AI laid bare, and it’s no wonder they make every effort to hide them.

Leaked System Prompts

System prompts are usually kept secret from users, with companies either labelling them as “trade secrets” or claiming that disclosure may expose vulnerabilities which could be exploited. Even with this secrecy, the right prompting techniques can coax the AI into revealing its inner workings.

Anthropic stands out for publishing their prompt openly. This should be the norm, not the exception. We are interacting with these systems and we have a right to know how they are designed to behave.

Transparency here matters for the same reason it matters anywhere: without it, you can’t evaluate what you’re dealing with. Public concerns about AI platforms disproportionately silencing certain political views would be easier to assess if the prompts were visible. Disclosed prompts let diverse stakeholders actually weigh in on whether systems align with societal values, rather than discovering the bias after the fact. In politically charged environments, the misinformation risk is real, and transparency is the only way to see the safeguards (or lack thereof) in place.

What’s Next?

We need immediate transparency of all system prompts so users can evaluate the political lean of the AI they’re interacting with. Since federal regulation appears to be going nowhere, that pressure has to come from users directly. These prompts hold real power over public discourse and they shouldn’t be a corporate secret.